As we move through 2026, the definition of "system health" has fundamentally shifted. We are no longer just monitoring static microservices; we are managing a complex, interconnected web of Agentic AI workflows, serverless kernels, and sovereign data infrastructures.

In this landscape, traditional "is it running?" checks are no longer sufficient. Today, the focus is on decipherability: the ability to trace the reasoning of an autonomous agent or the performance of an eBPF-instrumented kernel in real time. This guide breaks down the essential shifts in observability for 2026 and evaluates the tools helping architects maintain control.

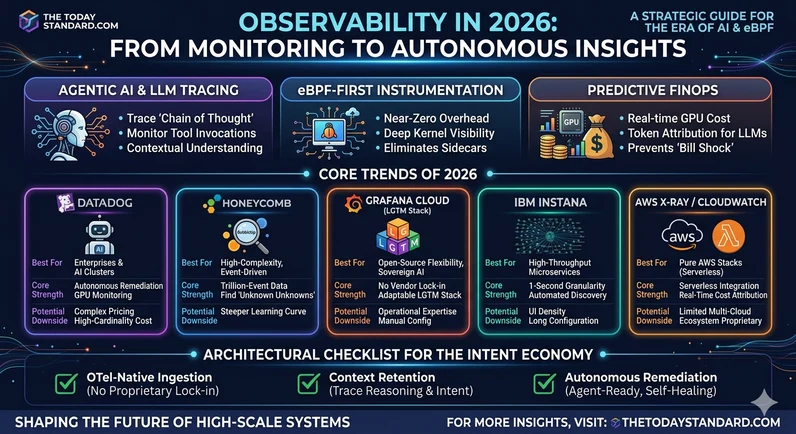

1. The Dominant Trends of 2026

The "Agentic" Observability Layer:

Generative AI was the story of 2024, but in 2026 the primary focus is agentic AI: autonomous systems that take actions. Observability must now capture not just the response but also the agent's "Chain of Thought" (CoT), its tool invocations, and the underlying context retrieval. Tracing a request now means following an agent as it calls multiple APIs, queries a vector database, and executes code.
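To make the idea concrete, here is a minimal sketch of what an agent trace could look like as a tree of spans. It uses only the Python standard library; the `Span` and `AgentTracer` classes, the span kinds, and the example names (`answer_refund_question`, `orders_api.get`) are hypothetical illustrations, not the API of OpenTelemetry or of any vendor discussed here.

```python
import time
import uuid
from contextlib import contextmanager
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Span:
    """One step in an agent run (hypothetical structure, not an OTel type)."""
    name: str
    kind: str  # e.g. "agent", "reasoning", "retrieval", "tool"
    span_id: str
    parent_id: Optional[str]
    attributes: dict = field(default_factory=dict)
    start: float = 0.0
    end: float = 0.0


class AgentTracer:
    """Collects finished spans in memory and tracks the active parent."""

    def __init__(self) -> None:
        self.spans: List[Span] = []
        self._stack: List[Span] = []

    @contextmanager
    def span(self, name: str, kind: str, **attributes):
        # The innermost open span, if any, becomes the parent.
        parent_id = self._stack[-1].span_id if self._stack else None
        current = Span(name, kind, uuid.uuid4().hex[:8], parent_id, dict(attributes))
        current.start = time.monotonic()
        self._stack.append(current)
        try:
            yield current
        finally:
            current.end = time.monotonic()
            self._stack.pop()
            self.spans.append(current)


tracer = AgentTracer()
with tracer.span("answer_refund_question", "agent") as root:
    with tracer.span("plan", "reasoning", summary="look up order, then check policy"):
        pass  # the model's reasoning step would run here
    with tracer.span("vector_search", "retrieval", index="policies", top_k=3):
        pass  # the vector database query would run here
    with tracer.span("orders_api.get", "tool", order_id="A-1001") as call:
        call.attributes["status_code"] = 200  # record the tool's outcome
```

Each reasoning step, retrieval, and tool call becomes a child of the agent's root span, so a single request can be replayed step by step. A production setup would export these spans to a tracing backend rather than keep them in a list.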

eBPF-First Instrumentation:

The "Sidecar" era is ending. eBPF (extended Berkeley Packet Filter) has matured into a default choice for deep visibility. Because they run inside the kernel, eBPF-native collectors provide low-overhead visibility into network flows, security signals, and application performance without requiring developers to inject agents or modify code.

Predictive FinOps and Cost Attribution:

With GPU time and LLM tokens driving massive infrastructure bills, observability has merged with FinOps. Modern platforms now offer "Cost-to-Insight" ratios that let engineering teams see in real time how much a specific agentic workflow or high-cardinality search query costs, helping prevent the dreaded "cloud bill shock."
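As a rough illustration of per-workflow cost attribution, the sketch below sums token and GPU costs by workflow. The unit prices, the `UsageEvent` fields, and the workflow names are all assumptions made up for the example; real prices depend on the provider and model.

```python
from collections import defaultdict
from dataclasses import dataclass

# Hypothetical unit prices in USD; real prices vary by provider and model.
PRICE_PER_1K_INPUT_TOKENS = 0.003
PRICE_PER_1K_OUTPUT_TOKENS = 0.015
PRICE_PER_GPU_SECOND = 0.0008


@dataclass
class UsageEvent:
    """One billable unit of work, tagged with the workflow that caused it."""
    workflow: str
    input_tokens: int
    output_tokens: int
    gpu_seconds: float


def event_cost(event: UsageEvent) -> float:
    """Cost of a single event under the assumed prices above."""
    return (
        event.input_tokens / 1000 * PRICE_PER_1K_INPUT_TOKENS
        + event.output_tokens / 1000 * PRICE_PER_1K_OUTPUT_TOKENS
        + event.gpu_seconds * PRICE_PER_GPU_SECOND
    )


def cost_by_workflow(events):
    """Sum event costs per workflow name."""
    totals = defaultdict(float)
    for event in events:
        totals[event.workflow] += event_cost(event)
    return dict(totals)


events = [
    UsageEvent("refund_agent", input_tokens=2000, output_tokens=1000, gpu_seconds=10.0),
    UsageEvent("refund_agent", input_tokens=1000, output_tokens=0, gpu_seconds=0.0),
    UsageEvent("search_agent", input_tokens=4000, output_tokens=2000, gpu_seconds=0.0),
]
totals = cost_by_workflow(events)
for workflow, usd in sorted(totals.items()):
    print(f"{workflow}: ${usd:.3f}")
```

The key design choice is tagging every usage event with the workflow that triggered it at emission time; without that tag, cost can only be reported per account or per service.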

2. Service Comparison: Selecting Your 2026 Stack

Choosing a provider in 2026 requires balancing "all-in-one" convenience against high-precision specialization.

Datadog: The Intelligent All-in-One

• Best For: Large enterprises and AI-driven clusters.

• Core Strength: Its "Bits AI" SRE assistant and autonomous agents are the 2026 leaders in automated remediation. It also offers the most mature GPU monitoring for teams scaling LLM-heavy infrastructure.

• Potential Downside: Pricing remains high and complex, especially when dealing with the high-cardinality data generated by thousands of autonomous agents.

Honeycomb: The High-Cardinality Master

• Best For: High-complexity debugging and teams using event-driven architectures.

• Core Strength: Their "BubbleUp" feature automatically identifies which attributes (User ID, feature flag, etc.) are causing anomalies in trillion-event datasets. It remains the gold standard for "unknown-unknown" debugging.

• Potential Downside: A steeper learning curve for developers accustomed to traditional pre-built dashboards; it requires a mindset shift toward exploratory querying.
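The general idea behind this style of debugging, comparing how often each attribute value appears in anomalous events versus a baseline, can be sketched in a few lines. This is a simplified illustration with made-up data and a hypothetical `attribute_skew` function; it is not Honeycomb's actual BubbleUp implementation.

```python
from collections import Counter


def attribute_skew(anomalous, baseline):
    """Rank (attribute, value) pairs by how much more frequent they are
    in the anomalous events than in the baseline events."""

    def frequencies(events):
        counts = Counter()
        for event in events:
            for key, value in event.items():
                counts[(key, value)] += 1
        total = len(events) or 1  # avoid division by zero on empty input
        return {pair: n / total for pair, n in counts.items()}

    anomalous_freq = frequencies(anomalous)
    baseline_freq = frequencies(baseline)
    diffs = {
        pair: freq - baseline_freq.get(pair, 0.0)
        for pair, freq in anomalous_freq.items()
    }
    return sorted(diffs.items(), key=lambda item: item[1], reverse=True)


# Made-up events: each dict holds the attributes of one request.
baseline = [
    {"feature_flag": "off", "region": "eu"},
    {"feature_flag": "off", "region": "us"},
    {"feature_flag": "on", "region": "us"},
    {"feature_flag": "off", "region": "eu"},
]
slow_requests = [
    {"feature_flag": "on", "region": "eu"},
    {"feature_flag": "on", "region": "us"},
]

ranking = attribute_skew(slow_requests, baseline)
top_pair, top_diff = ranking[0]
print(top_pair, round(top_diff, 2))
```

In this toy data the feature flag stands out because every slow request has it enabled, while region is distributed the same way in both sets. Real tools apply the same comparison across many more attributes and far larger event volumes.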

Grafana Cloud (LGTM Stack): The Open-Source Standard

• Best For: Teams prioritizing flexibility and zero vendor lock-in.

• Core Strength: Built on the OTel-native LGTM stack (Loki, Grafana, Tempo, Mimir), it offers the most adaptable experience. It’s perfect for organizations moving toward sovereign AI infrastructure.

• Potential Downside: Requires more manual configuration and operational expertise to maintain high-performance visualizations compared to fully managed SaaS.

IBM Instana: The Microservices Specialist

• Best For: High-throughput microservices and cloud-native apps requiring precision.

• Core Strength: Offers 1-second metric granularity and automated discovery of all components. In 2026, it excels at capturing transient errors in rapid-fire, containerized environments.

• Potential Downside: Large-scale deployments can take longer to fully configure, and the UI can feel dense for newer developers.
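A small arithmetic example shows why 1-second granularity matters for transient errors. The numbers below are invented for illustration: a 3-second error burst inside an otherwise healthy minute, checked against a hypothetical alert threshold.

```python
from statistics import mean

# Hypothetical per-second error rates (%) over one minute:
# healthy except for a 3-second burst.
per_second = [0.0] * 60
per_second[20:23] = [90.0, 95.0, 85.0]

ALERT_THRESHOLD = 50.0  # hypothetical alerting threshold, in percent

# What a 60-second rollup would report versus the worst single second.
one_minute_average = mean(per_second)
peak_one_second = max(per_second)

alert_at_60s = one_minute_average > ALERT_THRESHOLD
alert_at_1s = peak_one_second > ALERT_THRESHOLD

print(f"60s average: {one_minute_average:.1f}%  alert: {alert_at_60s}")
print(f"1s peak:     {peak_one_second:.1f}%  alert: {alert_at_1s}")
```

Averaged over the minute, the burst is diluted well below the threshold, while the per-second series exceeds it clearly. Coarse rollups hide exactly the short-lived failures that containerized environments tend to produce.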

AWS X-Ray / CloudWatch: The Ecosystem Choice

• Best For: Pure AWS stacks utilizing Lambda, DynamoDB, and OpenSearch.

• Core Strength: Deeply integrated into the AWS control plane with superior real-time cost attribution for serverless functions.

• Potential Downside: Limited visibility for multi-cloud or hybrid-cloud environments where data spans multiple vendors.

3. Architectural Checklist for the Intent Economy

When choosing your provider this year, prioritize these three pillars:

1. OTel-Native Ingestion: Does the service support the latest OpenTelemetry standards for AI and eBPF natively? Avoid proprietary agents that lock you into a single ecosystem.

2. Context Retention: In an "Intent Economy," the "Why" matters more than the "What." Ensure your tool can store and correlate reasoning traces with infrastructure metrics.

3. Autonomous Remediation: Is the tool "agent-ready"? The trend is moving toward observability platforms that don't just alert a human, but trigger an AI agent to roll back a deployment or scale a cluster automatically.
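The third pillar can be sketched as a small decision rule that calls a rollback hook instead of only paging a human. Everything here is an assumption for illustration: the `Deployment` fields, the 5% threshold, the three-sample window, and the `fake_rollback` callback, which stands in for whatever deployment API or agent a real platform would invoke.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Deployment:
    service: str
    version: str
    previous_version: str


def decide_action(error_rates: List[float], threshold: float, sustained: int) -> str:
    """Return "rollback" when the last `sustained` samples all exceed
    `threshold`; otherwise return "none". Assumes sustained >= 1."""
    recent = error_rates[-sustained:]
    if len(recent) == sustained and all(rate > threshold for rate in recent):
        return "rollback"
    return "none"


def remediate(
    deploy: Deployment,
    error_rates: List[float],
    rollback: Callable[[str, str], None],
    threshold: float = 0.05,
    sustained: int = 3,
) -> str:
    """Trigger the rollback hook if the error rate stays above the threshold."""
    action = decide_action(error_rates, threshold, sustained)
    if action == "rollback":
        rollback(deploy.service, deploy.previous_version)
    return action


# Stand-in for a real deployment API: it only records what was requested.
calls = []


def fake_rollback(service: str, version: str) -> None:
    calls.append((service, version))


deploy = Deployment(service="checkout", version="v42", previous_version="v41")
result = remediate(deploy, [0.01, 0.08, 0.09, 0.12], fake_rollback)
print(result, calls)
```

Requiring several consecutive samples above the threshold is one simple way to avoid rolling back on a single noisy data point. A real system would also want guardrails such as rate limits and human approval for high-risk actions.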

Conclusion

In 2026, the gap between a successful deployment and a systemic failure is often found in the quality of your telemetry. By embracing eBPF for efficiency and specialized tracing for your AI agents, you can shift from reactive firefighting to proactive, autonomous operations.

For more strategic deep dives into high-scale systems and the evolution of sovereign architecture, follow the latest at thetodaystandard.com. We track the tools that define the next era of technology.