Introduction: The Scale Crisis of April 2026

For nearly a decade, the sidecar pattern has been the uncontested king of Kubernetes observability. If you needed distributed tracing, service mesh mTLS, or deep network logging, you simply "injected" another container into your Pod. It was simple, modular, and effective.

But simple architectures break at unprecedented scale.

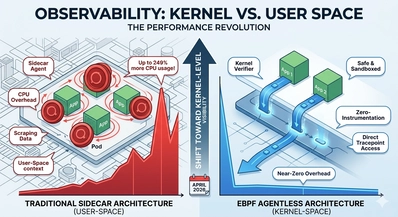

As we move into the second quarter of 2026, the complexity of cloud-native environments, fueled by massive agentic AI workflows and hyper-distributed microservices, has exposed the sidecar's fatal flaw: the user-space tax, the CPU spent shuttling data between the kernel and user-space processes.

The Problem: Why Sidecars Are Failing the Scale Test

The classic observability agent runs in user space. When an application sends a network request, the data is copied from the application into the kernel, handed back up to the sidecar container in user space to be logged, filtered, or encrypted, and then passed down into the kernel again before it leaves the node.

Every single context switch and data copy consumes CPU cycles. When you have five sidecars per Pod across ten thousand nodes, that "small" tax becomes a crippling liability.
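To get a feel for how the tax compounds, here is a back-of-the-envelope sketch. Only the node count and the sidecars-per-Pod figure come from the scenario above; the Pods-per-node and per-sidecar CPU figures are illustrative assumptions, not measurements.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FleetAssumptions:
    nodes: int = 10_000               # from the scenario in the text
    sidecars_per_pod: int = 5         # from the scenario in the text
    pods_per_node: int = 30           # assumption, adjust for your cluster
    millicores_per_sidecar: int = 50  # assumption, 0.05 of a core each


def sidecar_cores(a: FleetAssumptions) -> float:
    """Total CPU cores consumed by sidecars across the whole fleet."""
    millicores = (
        a.nodes * a.pods_per_node * a.sidecars_per_pod * a.millicores_per_sidecar
    )
    return millicores / 1000


fleet = FleetAssumptions()
total_cores = sidecar_cores(fleet)
print(f"{total_cores:,.0f} cores spent on sidecars")  # 75,000 cores
```

Under these assumptions, a footprint of just 50 millicores per sidecar adds up to 75,000 cores across the fleet. Swap in your own numbers to see where your cluster lands.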

The 2026 State of Cloud-Native Security & Observability Report validated what performance engineers have long suspected. The benchmarks are devastating: at high throughput, traditional sidecar-heavy observability frameworks consume up to 249% more CPU than newer kernel-native architectures to process the same volume of observability data.
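"249% more" is easy to misread, so here is the arithmetic spelled out. The helper names are made up for illustration; the only input taken from the report figure above is the 249% itself.

```python
def cpu_multiplier(percent_more: float) -> float:
    """Convert 'X% more CPU' into a multiplier on the baseline."""
    return 1 + percent_more / 100


def baseline_share(percent_more: float) -> float:
    """Fraction of the heavier stack's CPU that the baseline would need."""
    return 1 / cpu_multiplier(percent_more)


multiplier = cpu_multiplier(249)
share = baseline_share(249)
print(f"{multiplier:.2f}x the CPU of the kernel-native baseline")  # 3.49x
print(f"The baseline needs about {share:.0%} of that CPU")         # about 29%
```

In other words, at the upper bound quoted, the sidecar-heavy stack uses roughly three and a half times the CPU for the same observability workload.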

Organizations are realizing they are paying hyperscalers millions of dollars annually just to watch their applications run.

The Solution: The eBPF Kernel-Level Revolution

This is where eBPF (Extended Berkeley Packet Filter) changes everything.

eBPF allows developers to run sandboxed, verified programs inside the Linux kernel, without changing kernel source code or loading new modules. When integrated with observability, the difference is profound.

Instead of waiting for data to be copied up to user space, an eBPF program attaches directly to hooks inside the kernel, such as tracepoints and kprobes. It can observe every packet, every syscall, and every process execution at the moment it occurs. It filters and aggregates this data within the kernel and passes only the crucial summary metrics up to the user-space agent.
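The aggregate-then-summarize idea can be modeled in a few lines. This is a pure-Python stand-in, not real eBPF: the `KernelSideAggregator` class, the `Event` fields, and the sample values are all hypothetical, and the two counters merely play the role a BPF hash map keyed by `(pid, syscall)` would play inside the kernel.

```python
from collections import Counter
from typing import NamedTuple


class Event(NamedTuple):
    pid: int
    syscall: str
    bytes_sent: int


class KernelSideAggregator:
    """Pure-Python model of a BPF hash map keyed by (pid, syscall)."""

    def __init__(self) -> None:
        self.calls: Counter = Counter()
        self.bytes: Counter = Counter()

    def on_event(self, ev: Event) -> None:
        # In a real eBPF program this logic would run at the kernel hook,
        # updating the map in place without waking any user-space process.
        key = (ev.pid, ev.syscall)
        self.calls[key] += 1
        self.bytes[key] += ev.bytes_sent

    def flush(self) -> dict:
        """Hand one compact summary to the user-space agent, then reset."""
        summary = {
            key: {"calls": self.calls[key], "bytes": self.bytes[key]}
            for key in self.calls
        }
        self.calls.clear()
        self.bytes.clear()
        return summary


# Five raw events collapse into two summary rows.
events = [Event(101, "sendto", 512)] * 3 + [Event(202, "sendto", 128)] * 2
agg = KernelSideAggregator()
for ev in events:
    agg.on_event(ev)
summary = agg.flush()
print(summary)
```

The point of the design is that the user-space agent only ever sees the output of `flush()`, so its workload scales with the number of distinct keys rather than the number of raw events.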

The Benchmark: User-Space Scrape vs. Kernel-Level Trace

To understand the difference, let’s consider a common scenario: logging high-throughput gRPC connections.

- Traditional Sidecar Approach: The application container sends 10k RPS. A sidecar agent must capture, buffer, process, and forward this traffic. The context-switching overhead adds roughly 12% to application latency and drives node CPU usage up sharply during traffic bursts.

- eBPF Agentless Approach (2026 Standard): An eBPF program attached to a kernel hook detects the gRPC calls, increments a counter, and aggregates metrics in a BPF map. Observability adds no extra context switches on the request path. The application latency impact is too small to measure (~0%), and the added kernel CPU usage is negligible.

This "Zero-Overhead" model is the standard of 2026: the overhead is not literally zero, but it is small enough to disappear into measurement noise. The heavy lifting is done by the architecture, not the agent.
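The gap between the two approaches comes down to how often observability data has to cross the kernel/user-space boundary. The toy model below counts those crossings for the 10k RPS scenario. It is not a benchmark: the two-handoffs-per-request figure, the one-second flush interval, and the 60-second window are assumptions chosen for illustration.

```python
def sidecar_handoffs(rps: int, seconds: int, handoffs_per_request: int = 2) -> int:
    """Kernel/user-space handoffs when every request is relayed via a sidecar.

    handoffs_per_request is an assumption: one into the sidecar, one back out.
    """
    return rps * seconds * handoffs_per_request


def ebpf_handoffs(seconds: int, flush_interval_s: int = 1) -> int:
    """Handoffs when metrics sit in a map and are read once per interval."""
    return -(-seconds // flush_interval_s)  # ceiling division


rps, window_s = 10_000, 60
side = sidecar_handoffs(rps, window_s)
ebpf = ebpf_handoffs(window_s)
print(f"sidecar: {side:,} handoffs, eBPF: {ebpf:,} map reads")
# sidecar: 1,200,000 handoffs, eBPF: 60 map reads
```

The sidecar's cost grows with request volume, while the map-read cost grows only with elapsed time, which is why traffic bursts hurt one model and barely register in the other.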

How 2026 Platforms are Integrating Kernel Clarity

The shift isn't theoretical; it’s architectural. Major platforms are now integrating eBPF deeply:

- Observability Vendors: Tools that were once agent-heavy, such as New Relic’s 2026 eBPF platform, have pivoted to use eBPF for runtime visibility. They provide instant, agentless dashboards the moment their base kernel hook is deployed.

- Security Integration: The shift is happening in security too. Cloud-native security tools like Tetragon (by Isovalent) use eBPF not only to observe but also to enforce network security policies in real time within the kernel.

- Deployment Automation: Engineers are already automating the deployment of these "agentless" kernel programs using robust infrastructure-as-code tools. You can easily manage the lifecycle of an eBPF-based observability stack using AWS CDK, deploying the necessary kernel hooks as a privileged DaemonSet across your EKS clusters.
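As a rough sketch of what such a deployment might look like, the function below builds a privileged DaemonSet manifest as a plain Python dictionary. The agent name, image URL, and namespace are placeholders, not a real product. With CDK for Python, a dictionary like this can typically be handed to the EKS cluster construct's `add_manifest` method; the sketch stops at the manifest itself so it runs without any AWS dependencies.

```python
import json


def ebpf_daemonset(name: str, image: str, namespace: str = "observability") -> dict:
    """Build a DaemonSet manifest for a per-node eBPF agent (illustrative)."""
    labels = {"app": name}
    return {
        "apiVersion": "apps/v1",
        "kind": "DaemonSet",
        "metadata": {"name": name, "namespace": namespace, "labels": labels},
        "spec": {
            "selector": {"matchLabels": labels},
            "template": {
                "metadata": {"labels": labels},
                "spec": {
                    # See host processes so events can be attributed to Pods.
                    "hostPID": True,
                    "containers": [
                        {
                            "name": name,
                            "image": image,
                            # Loading eBPF programs needs elevated privileges.
                            "securityContext": {"privileged": True},
                            "volumeMounts": [
                                {"name": "bpffs", "mountPath": "/sys/fs/bpf"}
                            ],
                        }
                    ],
                    "volumes": [
                        {"name": "bpffs", "hostPath": {"path": "/sys/fs/bpf"}}
                    ],
                },
            },
        },
    }


# Hypothetical name and image, used only for illustration.
manifest = ebpf_daemonset("ebpf-agent", "registry.example.com/ebpf-agent:0.1.0")
print(json.dumps(manifest, indent=2))
```

Note that "agentless" here means no per-Pod sidecar: a DaemonSet still runs one privileged Pod on every node, so treat its image and permissions with the same care as any other privileged workload.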

Conclusion: The Future is Kernel-Level

The cloud landscape of 2026 values efficiency above all else. The days of accepting massive CPU bloat as the price of visibility are over. The benchmarking data proves that eBPF provides the "zero-instrumentation" clarity that sidecars cannot match.

If your 2026 architecture still relies on user-space agents to watch kernel-level events, you are burning money on infrastructure and sacrificing performance. The future of cloud-native engineering is moving down into the kernel.